Shadow AI Audit for 2026

Stopping Data Leaks in the Age of Autonomous Agents

Bottom Line Up Front:

• Productivity is winning the war against security, and the stakes have shifted from simple data leaks to unauthorized corporate actions.

• To protect the firm, you must provide sanctioned tools that are safer than the ones your employees are finding themselves.

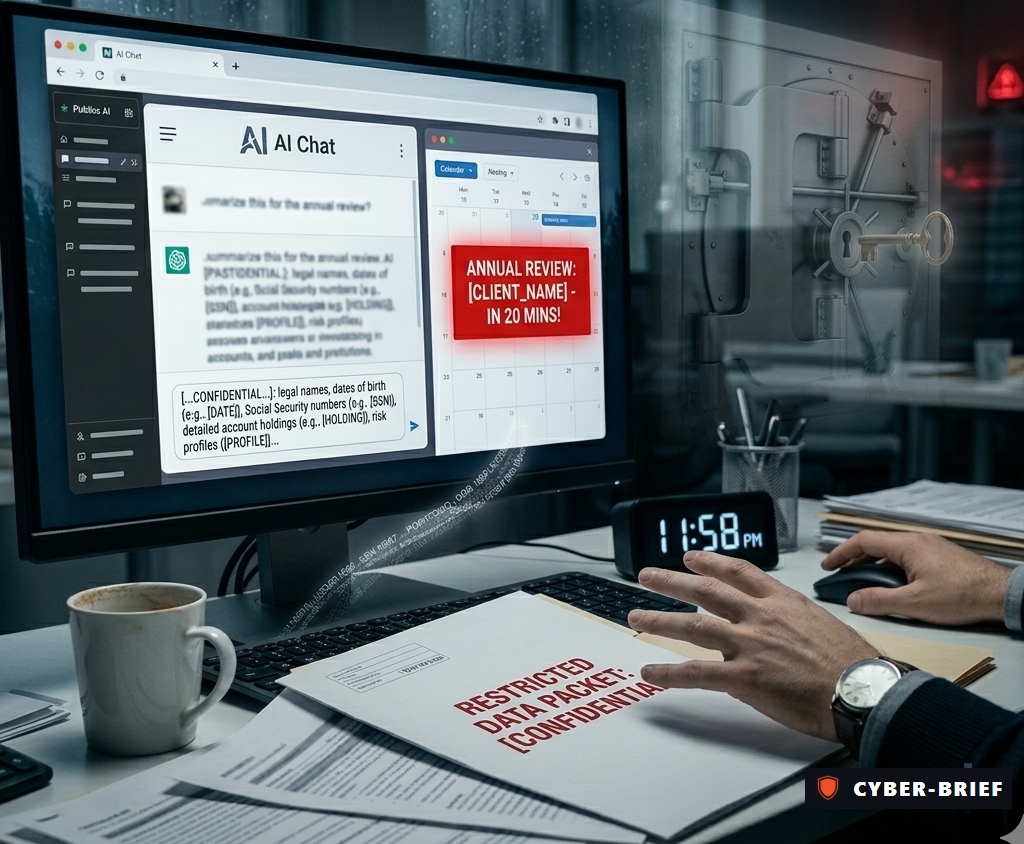

Without guardrails, an analyst in your firm is likely using a public AI tool to beat a deadline. They might be pasting a client's personal information — a file with legal names, dates of birth, account holdings, and risk profiles — into a chatbot to summarize it for an annual review. In that moment, they have handed restricted data to a third party with no contract, no audit trail, and no way to get it back.

This is Shadow AI. It is the 2026 version of an unsupervised intern with the keys to the vault.

The New Threat to Corporate Authority

For years, Shadow IT meant unauthorized apps — a personal Dropbox account here, an unapproved browser extension there. Security teams learned to manage it. But the risk has evolved into something far more consequential: Autonomous AI Agents.

Unlike a chatbot — which waits for a human to type a question — an AI agent is a system that can plan, decide, and act on its own. It can be granted ongoing access to your firm's email, calendar, internal drives, or Customer Relationship Management tool, and execute multi-step tasks without human involvement at each step.

AI agents can execute code, enter agreements, and bind firms to contracts — without a human ever signing off. Under traditional agency law, that does not matter. If it acted on your behalf, you are liable. (1)

Regulators Are No Longer Waiting

The regulatory "grace period" for AI is over. "We didn't know" is no longer a defense. Regulators now require Substantiation: documented proof that your actual AI usage matches your public disclosures. (2)

If your team is quietly using public AI while you claim to safeguard client data, regulators call this "AI Washing." Like greenwashing, this is increasingly treated as a material misstatement or a misleading representation of your compliance program. FINRA now expects you to monitor these automated actors with the same rigor as human employees. (3)

Traditional Security Tools Are Context-Blind

Standard security tools see that an employee is visiting a website, but they cannot "read" the conversation. When an employee pastes client data into an AI tool and types "analyze these holdings," it looks like any other encrypted web traffic. No alert fires, and no record is created of what was shared.

To close this gap, firms are shifting to Semantic Inspection. Unlike old tools that just watch where data is sent, these new solutions actually understand the meaning of the text. By using AI gateway proxies to inspect the content of a prompt in real time, your system can detect and block sensitive financial data before it ever leaves your internal network.
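As a rough illustration of where such a gateway check sits, the sketch below screens an outbound prompt before it is forwarded. It is a simplified, pattern-based stand-in: a production semantic inspection layer would rely on a trained classifier rather than regular expressions. The names `inspect_prompt` and `PATTERNS`, and the account-number format, are assumptions made for this example.

```python
import re

# Hypothetical patterns for illustration only. A real gateway would pair
# these with a trained classifier instead of relying on regexes alone.
PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "date_of_birth": re.compile(
        r"\b(?:DOB|date of birth)\b[:\s]*\d{1,2}/\d{1,2}/\d{4}", re.IGNORECASE
    ),
    "account_number": re.compile(
        r"\b(?:acct|account)\s*(?:no\.?|number|#)?[:\s]*\d{8,12}\b", re.IGNORECASE
    ),
}


def inspect_prompt(prompt: str) -> dict:
    """Return an allow/block decision and the categories that triggered it."""
    findings = sorted(name for name, rx in PATTERNS.items() if rx.search(prompt))
    return {"allow": not findings, "findings": findings}


decision = inspect_prompt(
    "Summarize: Jane Roe, DOB 04/12/1961, account #123456789, SSN 123-45-6789"
)
if not decision["allow"]:
    print("Blocked before leaving the network:", decision["findings"])
```

The design point is placement rather than the detection method: the check runs on the prompt text itself, inside the network, before the request reaches any external provider.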

Reclaiming Control: What a 2026 Shadow AI Audit Actually Covers

Most executives assume a Shadow AI audit is simply a list of unauthorized websites their employees visited. It is not. A modern audit goes several layers deeper — and what it uncovers often surprises even the most security-aware leadership teams. Here is what your security team should be examining:

1. Unauthorized AI Tool Discovery

The starting point. A full scan of DNS logs, browser telemetry, egress traffic, and SaaS OAuth grants to map every AI tool being used inside your firm — approved or not. In most financial services firms, this surfaces dozens of tools nobody in leadership knew existed.
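A minimal sketch of the DNS portion of that scan might look like the following. The log line layout, the `AI_DOMAINS` list, and the `SANCTIONED` allow-list are all assumptions for illustration; a real audit would use a maintained catalogue of AI services and the firm's own log format.

```python
from collections import Counter

# Illustrative, non-exhaustive list of AI service domains (an assumption).
AI_DOMAINS = {"chatgpt.com", "chat.openai.com", "claude.ai", "gemini.google.com"}
# Hypothetical allow-list of tools the firm has formally approved.
SANCTIONED = {"gemini.google.com"}


def discover_ai_tools(dns_log_lines):
    """Count queries per AI domain and mark whether each one is sanctioned.

    Each line is assumed to look like: "<timestamp> <client_ip> <queried_domain>".
    """
    hits = Counter()
    for line in dns_log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines
        domain = parts[2].lower().rstrip(".")
        if domain in AI_DOMAINS:
            hits[domain] += 1
    return {d: {"queries": n, "sanctioned": d in SANCTIONED} for d, n in hits.items()}


sample_log = [
    "2026-01-05T09:00:01Z 10.0.0.4 claude.ai",
    "2026-01-05T09:00:02Z 10.0.0.4 intranet.example.com",
    "2026-01-05T09:02:00Z 10.0.0.7 gemini.google.com",
]
for domain, info in discover_ai_tools(sample_log).items():
    print(domain, info)
```

DNS alone will miss tools reached through browser extensions or embedded SaaS features, which is why the audit also draws on browser telemetry, egress traffic, and OAuth grants.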

2. The Agentic Footprint

The most dangerous finding of a modern audit. This goes beyond chatbots to identify AI agents — automated systems that have been quietly granted ongoing Read/Write access to executive mailboxes, internal drives, CRM systems, or communication platforms. These are not one-time data pastes. They are persistent connections with continuous access to your most sensitive information.
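One way to picture this check is as a filter over exported OAuth grants, keeping only those that combine write-capable scopes with ongoing access. The `OAuthGrant` record, its field names, and the `WRITE_MARKERS` keywords below are hypothetical simplifications, not any identity provider's actual schema.

```python
from dataclasses import dataclass


@dataclass
class OAuthGrant:
    """Hypothetical, simplified record of a third-party app grant."""
    app_name: str
    user: str
    scopes: tuple          # e.g. ("mail.read", "mail.send")
    offline_access: bool   # True if the app holds a long-lived refresh token


# Assumed scope keywords that signal the ability to change or send data.
WRITE_MARKERS = ("write", "send", "modify", "delete")


def find_persistent_agents(grants):
    """Return grants that combine write-capable scopes with ongoing access."""
    flagged = []
    for g in grants:
        can_write = any(m in s.lower() for s in g.scopes for m in WRITE_MARKERS)
        if can_write and g.offline_access:
            flagged.append(g)
    return flagged


grants = [
    OAuthGrant("NoteTaker AI", "ceo@example.com", ("mail.read", "mail.send"), True),
    OAuthGrant("Calendar Viewer", "analyst@example.com", ("calendar.read",), True),
]
for g in find_persistent_agents(grants):
    print(f"Review: {g.app_name} granted by {g.user} with scopes {g.scopes}")
```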

3. PII Exposure Mapping

Once unauthorized tools are identified, the audit traces what data actually left the firm — and through which channels. This includes client names, account details, Social Security numbers, and financial records that may have been submitted in prompts over days, weeks, or months.
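The output of this step can be thought of as a per-tool summary of what was exposed and over what period. The sketch below assumes an upstream detector has already produced `(tool, day, categories)` events; that event layout and the function name `build_exposure_map` are hypothetical.

```python
from collections import defaultdict
from datetime import date


def build_exposure_map(events):
    """Summarise, per tool, which data categories were exposed and when.

    Each event is assumed to be a (tool, day, categories) tuple.
    """
    summary = defaultdict(
        lambda: {"categories": set(), "first_seen": None, "last_seen": None, "events": 0}
    )
    for tool, day, categories in events:
        entry = summary[tool]
        entry["categories"].update(categories)
        entry["events"] += 1
        if entry["first_seen"] is None or day < entry["first_seen"]:
            entry["first_seen"] = day
        if entry["last_seen"] is None or day > entry["last_seen"]:
            entry["last_seen"] = day
    return dict(summary)


events = [
    ("chatbot-a", date(2026, 1, 12), {"client_name", "ssn"}),
    ("chatbot-a", date(2026, 1, 3), {"account_details"}),
    ("summarizer-b", date(2026, 2, 1), {"client_name"}),
]
for tool, info in build_exposure_map(events).items():
    print(tool, sorted(info["categories"]), info["first_seen"], info["last_seen"])
```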

4. The Substantiation Gap Analysis

The audit's most strategically important output. This is a direct comparison between what your compliance policies say your firm does with AI — and what is actually happening on the ground. This gap is precisely what SEC examiners are now looking for, and closing it before an examination is far less costly than explaining it during one.
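At its simplest, the comparison is a set difference between the tools your policies name and the tools the audit actually observed. The sketch below uses a hypothetical function name and made-up tool names, and it assumes both lists have already been compiled.

```python
def substantiation_gap(disclosed_tools, observed_tools):
    """Compare tools named in policy or disclosures with tools actually observed."""
    disclosed = {t.strip().lower() for t in disclosed_tools}
    observed = {t.strip().lower() for t in observed_tools}
    return {
        # In use on the ground but absent from policy: the core of the gap.
        "undisclosed_in_use": sorted(observed - disclosed),
        # Named in policy but with no usage observed.
        "disclosed_not_observed": sorted(disclosed - observed),
        # Policy and practice agree.
        "substantiated": sorted(disclosed & observed),
    }


gap = substantiation_gap(
    disclosed_tools=["Enterprise Assistant", "Doc Search"],
    observed_tools=["enterprise assistant", "Public Chatbot"],
)
print(gap["undisclosed_in_use"])
```

A real analysis would also compare how each tool is used (data types, retention terms, access scopes) against what the policy states, not only whether the tool appears on the list.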

5. Shadow Workflow Identification

The final step — and the one that turns the audit into a roadmap. By identifying the specific tasks employees are using Shadow AI to accomplish, your firm can prioritize which governed, enterprise-grade tools to deploy first. The goal is not to eliminate those workflows. It is to bring them into a safe, auditable environment where they can continue — without the risk.

The Answer Is Enablement, Not Prohibition

The most resilient firms are building a Path of Least Resistance — deploying enterprise-grade AI platforms backed by Zero Data Retention (ZDR) agreements, where client data is never used to train the underlying model. When the sanctioned tool is faster, smarter, and more capable than whatever an employee would find on their own, compliance becomes the obvious choice.

Enablement also means building a formal AI tool request process and standing up a cross-functional AI governance board that can scale as the volume of tool requests grows, because it will.

Did You Know?

- The riskiest channel for sensitive data exposure isn't email or file storage — it's instant messaging. 62% of all pastes into Chat and IM apps contain PII or PCI data, and 87% of those pastes originate from unmanaged, non-corporate accounts. For security teams, this is effectively a blind spot: high-sensitivity data, moving at volume, through channels that fall entirely outside identity management and DLP controls.

- On personal AI accounts, "Paid" does not mean "Private." Even on $20/month Pro plans, conversations may be used to train future models by default, depending on the provider. Furthermore, "deleted" chats often persist for 30 days on provider servers for safety monitoring, meaning your firm's data remains exposed long after the browser tab is closed.

- The average cost of a data breach in financial services is now $7.9 million, among the highest of any industry. (4)

- A real-world parallel to the unsupervised-intern scenario: Samsung employees leaking data through ChatGPT in 2023.

Works Cited

1 - 2026 AI Legal Forecast by Baker Donelson

2 - 2026 SEC Examination Priorities

3 - 2026 FINRA Annual Regulatory Oversight Report

4 - IBM Security Cost of a Data Breach Report 2025/2026
